Inflearn Community Q&A

Error when running npx sst dev

Resolved question


When I run npx sst dev, I get this error:

It seems that your package manager failed to install the right version of the SST CLI for your platform. You can try manually installing the "sst-win32-x64" package.

What could be the problem?

10 answers


=> ERROR [11/18] RUN python3 download.py 30.5s

------

> [11/18] RUN python3 download.py:

3.181 The cache for model files in Transformers v4.22.0 has been updated. Migrating your old cache. This is a one-time only operation. You can interrupt this and resume the migration later on by calling transformers.utils.move_cache().

0it [00:00, ?it/s]

28.48 /opt/conda/lib/python3.8/site-packages/torchvision/io/image.py:13: UserWarning: Failed to load image Python extension: libtorch_cuda_cu.so: cannot open shared object file: No such file or directory

28.48 warn(f"Failed to load image Python extension: {e}")

28.48 /opt/conda/lib/python3.8/site-packages/xformers/ops/fmha/flash.py:211: FutureWarning: torch.library.impl_abstract was renamed to torch.library.register_fake. Please use that instead; we will remove torch.library.impl_abstract in a future version of PyTorch.

28.48 @torch.library.impl_abstract("xformers_flash::flash_fwd")

28.48 /opt/conda/lib/python3.8/site-packages/xformers/ops/fmha/flash.py:344: FutureWarning: torch.library.impl_abstract was renamed to torch.library.register_fake. Please use that instead; we will remove torch.library.impl_abstract in a future version of PyTorch.

28.48 @torch.library.impl_abstract("xformers_flash::flash_bwd")

28.48 Couldn't connect to the Hub: 401 Client Error. (Request ID: Root=1-66e0111d-334700fb4380a00a752bdb0e;46c20cef-fce5-4884-861c-da7ab442c775)

28.48

28.48 Repository Not Found for url: https://huggingface.co/api/models/runwayml/stable-diffusion-v1-5.

28.48 Please make sure you specified the correct repo_id and repo_type.

28.48 If you are trying to access a private or gated repo, make sure you are authenticated.

28.48 Invalid username or password..

28.48 Will try to load from local cache.

28.48 downloading reg images...

28.49 Traceback (most recent call last):

28.49 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/utils/_errors.py", line 304, in hf_raise_for_status

28.49 response.raise_for_status()

28.49 File "/opt/conda/lib/python3.8/site-packages/requests/models.py", line 1024, in raise_for_status

28.49 raise HTTPError(http_error_msg, response=self)

28.49 requests.exceptions.HTTPError: 401 Client Error: Unauthorized for url: https://huggingface.co/api/models/runwayml/stable-diffusion-v1-5

28.49

28.49 The above exception was the direct cause of the following exception:

28.49

28.49 Traceback (most recent call last):

28.49 File "/opt/conda/lib/python3.8/site-packages/diffusers/pipelines/pipeline_utils.py", line 1291, in download

28.49 info = model_info(pretrained_model_name, token=token, revision=revision)

28.49 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/utils/_validators.py", line 114, in _inner_fn

28.49 return fn(*args, **kwargs)

28.49 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/hf_api.py", line 2373, in model_info

28.49 hf_raise_for_status(r)

28.49 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/utils/_errors.py", line 352, in hf_raise_for_status

28.49 raise RepositoryNotFoundError(message, response) from e

28.49 huggingface_hub.utils._errors.RepositoryNotFoundError: 401 Client Error. (Request ID: Root=1-66e0111d-334700fb4380a00a752bdb0e;46c20cef-fce5-4884-861c-da7ab442c775)

28.49

28.49 Repository Not Found for url: https://huggingface.co/api/models/runwayml/stable-diffusion-v1-5.

28.49 Please make sure you specified the correct repo_id and repo_type.

28.49 If you are trying to access a private or gated repo, make sure you are authenticated.

28.49 Invalid username or password.

28.49

28.49 The above exception was the direct cause of the following exception:

28.49

28.49 Traceback (most recent call last):

28.49 File "download.py", line 64, in <module>

28.49 download_model()

28.49 File "download.py", line 30, in download_model

28.49 pipe = StableDiffusionPipeline.from_pretrained(

28.49 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/utils/_validators.py", line 114, in _inner_fn

28.49 return fn(*args, **kwargs)

28.49 File "/opt/conda/lib/python3.8/site-packages/diffusers/pipelines/pipeline_utils.py", line 699, in from_pretrained

28.49 cached_folder = cls.download(

28.49 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/utils/_validators.py", line 114, in _inner_fn

28.49 return fn(*args, **kwargs)

28.49 File "/opt/conda/lib/python3.8/site-packages/diffusers/pipelines/pipeline_utils.py", line 1536, in download

28.49 raise EnvironmentError(

28.49 OSError: Cannot load model runwayml/stable-diffusion-v1-5: model is not cached locally and an error occurred while trying to fetch metadata from the Hub. Please check out the root cause in the stacktrace above.

I get this error when I run the Docker build. I applied my Hugging Face token correctly, but it still fails. Is there a way to fix this?

I was granted access to the new diffusion model and changed every reference to point to it, but now I get:

=> ERROR [12/18] RUN python3 download.py 6.9s

------

> [12/18] RUN python3 download.py:

3.041 The cache for model files in Transformers v4.22.0 has been updated. Migrating your old cache. This is a one-time only operation. You can interrupt this and resume the migration later on by calling transformers.utils.move_cache().

0it [00:00, ?it/s]

6.105 /opt/conda/lib/python3.8/site-packages/torchvision/io/image.py:13: UserWarning: Failed to load image Python extension: libtorch_cuda_cu.so: cannot open shared object file: No such file or directory

6.105 warn(f"Failed to load image Python extension: {e}")

6.105 /opt/conda/lib/python3.8/site-packages/xformers/ops/fmha/flash.py:211: FutureWarning: torch.library.impl_abstract was renamed to torch.library.register_fake. Please use that instead; we will remove torch.library.impl_abstract in a future version of PyTorch.

6.105 @torch.library.impl_abstract("xformers_flash::flash_fwd")

6.105 /opt/conda/lib/python3.8/site-packages/xformers/ops/fmha/flash.py:344: FutureWarning: torch.library.impl_abstract was renamed to torch.library.register_fake. Please use that instead; we will remove torch.library.impl_abstract in a future version of PyTorch.

6.105 @torch.library.impl_abstract("xformers_flash::flash_bwd")

6.106 Traceback (most recent call last):

6.106 File "download.py", line 7, in <module>

6.106 from diffusers.models import AutoencoderKLf

6.106 ImportError: cannot import name 'AutoencoderKLf' from 'diffusers.models' (/opt/conda/lib/python3.8/site-packages/diffusers/models/__init__.py)

------

5 warnings found (use docker --debug to expand):

- SecretsUsedInArgOrEnv: Do not use ARG or ENV instructions for sensitive data (ENV "BANANA_SECRET_KEY") (line 12)

- JSONArgsRecommended: JSON arguments recommended for CMD to prevent unintended behavior related to OS signals (line 47)

- SecretsUsedInArgOrEnv: Do not use ARG or ENV instructions for sensitive data (ENV "HF_AUTH_TOKEN") (line 8)

- SecretsUsedInArgOrEnv: Do not use ARG or ENV instructions for sensitive data (ENV "AWS_ACCESS_KEY_ID") (line 9)

- SecretsUsedInArgOrEnv: Do not use ARG or ENV instructions for sensitive data (ENV "AWS_SECRET_ACCESS_KEY") (line 10)

Dockerfile:31

--------------------

29 | ADD s3_file_manager.py .

30 | ADD download.py .

31 | >>> RUN python3 download.py

32 |

33 | ADD convert_diffusers_to_original_stable_diffusion.py .

--------------------

ERROR: failed to solve: process "/bin/sh -c python3 download.py" did not complete successfully: exit code: 1

View build details: docker-desktop://dashboard/build/desktop-linux/desktop-linux/sb7s2sbbvegteyob8f874vw4z

This is the error I get now.

There seem to be fewer errors than before, but what is the problem?

File "download.py", line 7, in <module>

6.106 from diffusers.models import AutoencoderKLf

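In the output above, the import on line 7 of download.py ends with AutoencoderKLf, but the correct name is AutoencoderKL. Please delete the trailing f and try again.

I also tried it after removing the f, but I get: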

=> ERROR [12/18] RUN python3 download.py 27.4s

------

> [12/18] RUN python3 download.py:

2.928 The cache for model files in Transformers v4.22.0 has been updated. Migrating your old cache. This is a one-time only operation. You can interrupt this and resume the migration later on by calling transformers.utils.move_cache().

0it [00:00, ?it/s]downloading reg images...

25.44

25.44 /opt/conda/lib/python3.8/site-packages/torchvision/io/image.py:13: UserWarning: Failed to load image Python extension: libtorch_cuda_cu.so: cannot open shared object file: No such file or directory

25.44 warn(f"Failed to load image Python extension: {e}")

25.44 /opt/conda/lib/python3.8/site-packages/xformers/ops/fmha/flash.py:211: FutureWarning: torch.library.impl_abstract was renamed to torch.library.register_fake. Please use that instead; we will remove torch.library.impl_abstract in a future version of PyTorch.

25.44 @torch.library.impl_abstract("xformers_flash::flash_fwd")

25.44 /opt/conda/lib/python3.8/site-packages/xformers/ops/fmha/flash.py:344: FutureWarning: torch.library.impl_abstract was renamed to torch.library.register_fake. Please use that instead; we will remove torch.library.impl_abstract in a future version of PyTorch.

25.44 @torch.library.impl_abstract("xformers_flash::flash_bwd")

25.46 Traceback (most recent call last):

25.46 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/utils/_errors.py", line 304, in hf_raise_for_status

25.46 response.raise_for_status()

25.46 File "/opt/conda/lib/python3.8/site-packages/requests/models.py", line 1024, in raise_for_status

25.46 raise HTTPError(http_error_msg, response=self)

25.46 requests.exceptions.HTTPError: 401 Client Error: Unauthorized for url: https://huggingface.co/benjamin-paine/stable-diffusion-v1-5/resolve/main/model_index.json

25.46

25.46 The above exception was the direct cause of the following exception:

25.46

25.46 Traceback (most recent call last):

25.46 File "download.py", line 64, in <module>

25.46 download_model()

25.46 File "download.py", line 30, in download_model

25.46 pipe = StableDiffusionPipeline.from_pretrained(

25.46 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/utils/_validators.py", line 114, in _inner_fn

25.46 return fn(*args, **kwargs)

25.46 File "/opt/conda/lib/python3.8/site-packages/diffusers/pipelines/pipeline_utils.py", line 699, in from_pretrained

25.46 cached_folder = cls.download(

25.46 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/utils/_validators.py", line 114, in _inner_fn

25.46 return fn(*args, **kwargs)

25.46 File "/opt/conda/lib/python3.8/site-packages/diffusers/pipelines/pipeline_utils.py", line 1298, in download

25.46 config_file = hf_hub_download(

25.46 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/utils/_deprecation.py", line 101, in inner_f

25.46 return f(*args, **kwargs)

25.46 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/utils/_validators.py", line 114, in _inner_fn

25.46 return fn(*args, **kwargs)

25.46 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/file_download.py", line 1240, in hf_hub_download

25.46 return _hf_hub_download_to_cache_dir(

25.46 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/file_download.py", line 1347, in _hf_hub_download_to_cache_dir

25.46 _raise_on_head_call_error(head_call_error, force_download, local_files_only)

25.46 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/file_download.py", line 1854, in _raise_on_head_call_error

25.46 raise head_call_error

25.46 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/file_download.py", line 1751, in _get_metadata_or_catch_error

25.46 metadata = get_hf_file_metadata(

25.46 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/utils/_validators.py", line 114, in _inner_fn

25.46 return fn(*args, **kwargs)

25.46 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/file_download.py", line 1673, in get_hf_file_metadata

25.46 r = _request_wrapper(

25.46 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/file_download.py", line 376, in _request_wrapper

25.46 response = _request_wrapper(

25.46 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/file_download.py", line 400, in _request_wrapper

25.46 hf_raise_for_status(response)

25.46 File "/opt/conda/lib/python3.8/site-packages/huggingface_hub/utils/_errors.py", line 321, in hf_raise_for_status

25.46 raise GatedRepoError(message, response) from e

25.46 huggingface_hub.utils._errors.GatedRepoError: 401 Client Error. (Request ID: Root=1-66e14d3b-6ab28f8d21fa1d6a0c7187d9;4e0be24f-790c-4683-9f08-a9fd7974a122)

25.46

25.46 Cannot access gated repo for url https://huggingface.co/benjamin-paine/stable-diffusion-v1-5/resolve/main/model_index.json.

25.46 Access to model benjamin-paine/stable-diffusion-v1-5 is restricted. You must have access to it and be authenticated to access it. Please log in.

------

5 warnings found (use docker --debug to expand):

- SecretsUsedInArgOrEnv: Do not use ARG or ENV instructions for sensitive data (ENV "HF_AUTH_TOKEN") (line 8)

- SecretsUsedInArgOrEnv: Do not use ARG or ENV instructions for sensitive data (ENV "AWS_ACCESS_KEY_ID") (line 9)

- SecretsUsedInArgOrEnv: Do not use ARG or ENV instructions for sensitive data (ENV "AWS_SECRET_ACCESS_KEY") (line 10)

- SecretsUsedInArgOrEnv: Do not use ARG or ENV instructions for sensitive data (ENV "BANANA_SECRET_KEY") (line 12)

- JSONArgsRecommended: JSON arguments recommended for CMD to prevent unintended behavior related to OS signals (line 47)

Dockerfile:31

--------------------

29 | ADD s3_file_manager.py .

30 | ADD download.py .

31 | >>> RUN python3 download.py

32 |

33 | ADD convert_diffusers_to_original_stable_diffusion.py .

--------------------

ERROR: failed to solve: process "/bin/sh -c python3 download.py" did not complete successfully: exit code: 1

View build details: docker-desktop://dashboard/build/desktop-linux/desktop-linux/u3u4g7znhaiyiy532b4expo8x

This is the error I get.


Hello, and sorry for asking so many questions.

=> ERROR [ 1/18] FROM docker.io/pytorch/pytorch:1.11.0-cuda11.3-cudnn8-runtime@sha256:9904a7e081eaca29e3ee46afac87f2879676dd3bf7b5e9b8450454d84e074ef0

I get this error when I run the Docker build. Is there a way to fix it?


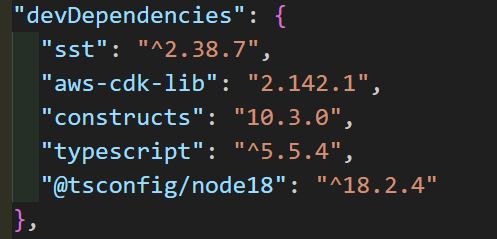

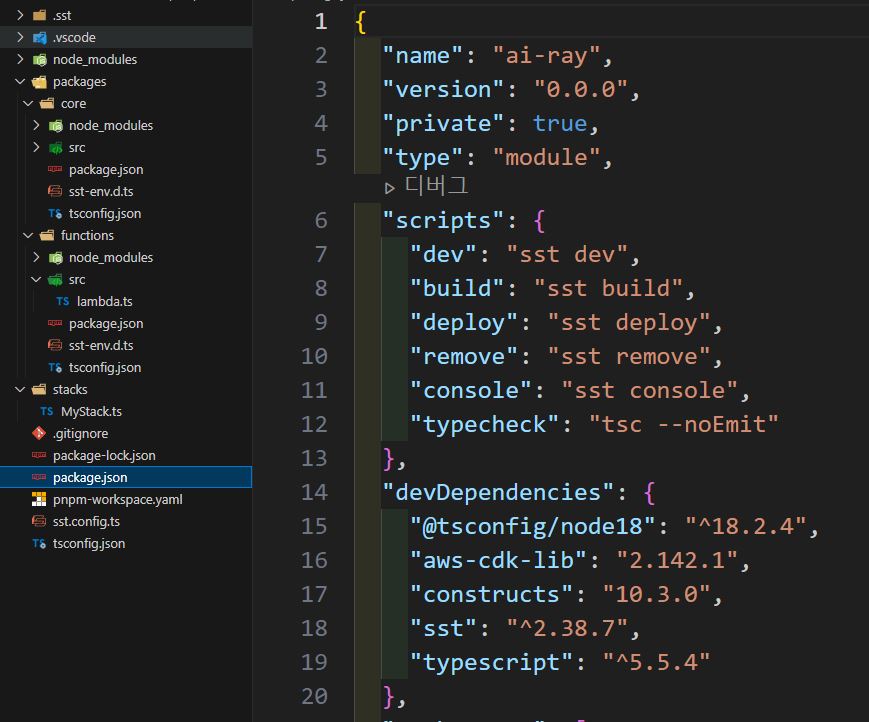

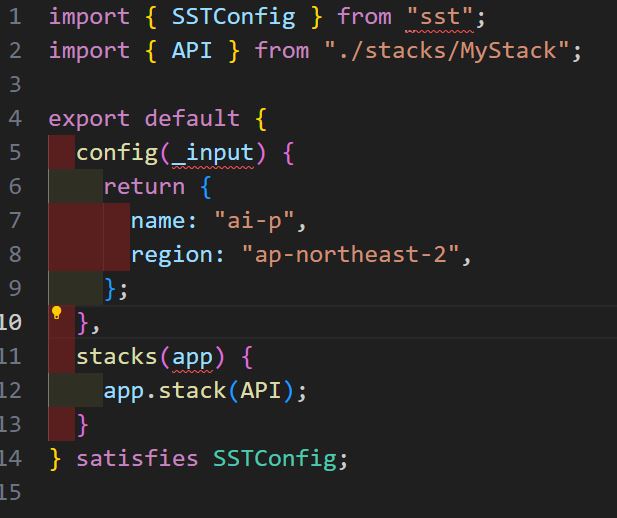

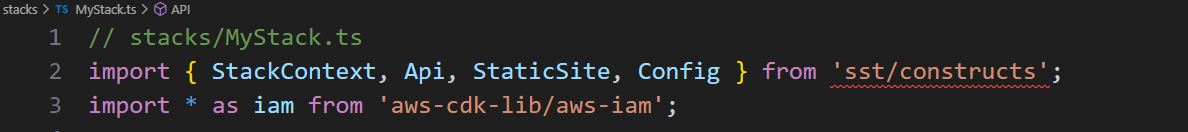

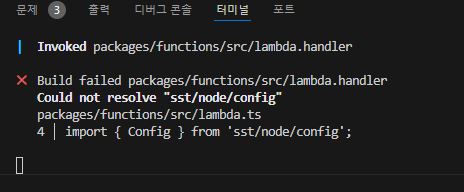

I tested this on Windows and confirmed a bug: even when you run npx create-sst@two {project_name}, the project is set up with SST v3.

I will update the lecture notes as well, but here are the steps:

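1. Run npx create-sst@two {project_name}.

2. Move to the project root and open package.json.

3. In devDependencies, change the value of "sst" to "^2.38.7".

4. Run npm install.

After that, follow the lecture as originally shown. I confirmed that this works without errors.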
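For anyone who prefers to script the package.json edit from the steps above, here is a minimal Python sketch. It assumes the file is plain JSON and that sst is listed under dependencies or devDependencies. The function name pin_sst is made up for this illustration, and the version string is the one quoted in this answer. You still need to run npm install afterwards.

```python
import json
from pathlib import Path

# Version string quoted in the answer above.
PINNED_SST = "^2.38.7"


def pin_sst(package_json: Path, version: str = PINNED_SST) -> bool:
    """Set the "sst" entry to `version`; return True if the file was changed.

    Hypothetical helper for illustration only, not part of SST or npm.
    """
    data = json.loads(package_json.read_text(encoding="utf-8"))
    changed = False
    for section in ("dependencies", "devDependencies"):
        deps = data.get(section, {})
        if "sst" in deps and deps["sst"] != version:
            deps["sst"] = version
            changed = True
    if changed:
        # Rewrite the file with 2-space indentation, as npm does by default.
        package_json.write_text(json.dumps(data, indent=2) + "\n", encoding="utf-8")
    return changed


# Example call, run from the project root:
# pin_sst(Path("package.json"))
```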

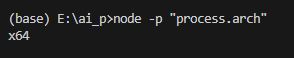

Also, please make sure you are using the x64 build of Node, for example through nvm.

I apologize for the inconvenience while you are taking the course.

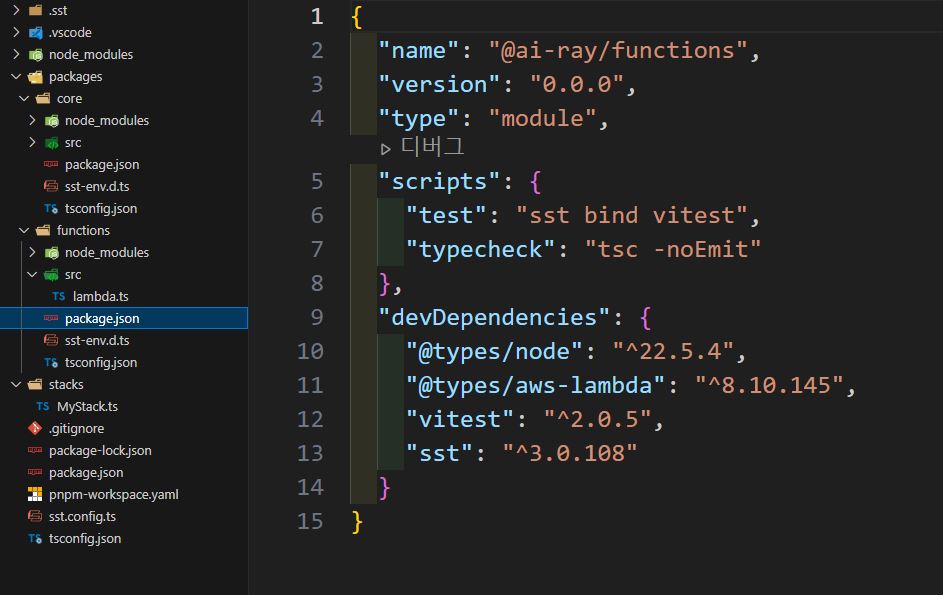

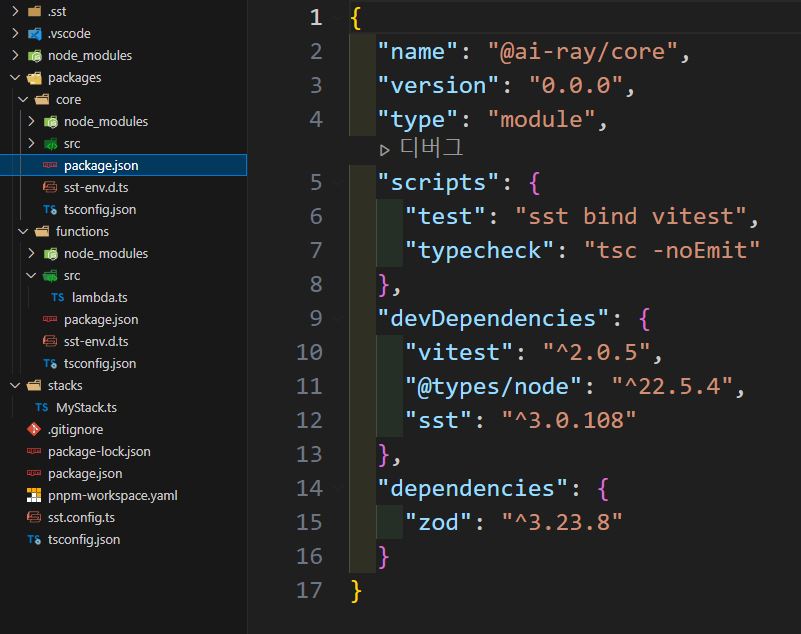

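I checked everything above and ran it again.

In the functions and core folders, sst is at 3.0.108. Could that be related?

I applied and verified everything above, but I still get that error, so I am asking again.

Could you change sst to version 2.38.7 in functions and core as well, and then run npm install?

The related issue is posted on GitHub:

https://github.com/sst/sst/issues/3844#issue-2484691265

The SST contributors are aware of this problem. It has not been fixed yet, so for now this more involved workaround is needed.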
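To see which package.json files in the project still declare a different sst version (for example the functions and core folders mentioned above), a small check along these lines may help. This is only a sketch: find_sst_versions is a hypothetical helper, and it assumes every package.json is valid JSON and that anything under node_modules can be ignored.

```python
import json
from pathlib import Path


def find_sst_versions(root: Path) -> dict:
    """Map each package.json under `root` to the sst version it declares.

    Hypothetical helper for illustration; files inside node_modules are skipped.
    """
    found = {}
    for pkg in sorted(root.rglob("package.json")):
        relative = pkg.relative_to(root)
        if "node_modules" in relative.parts:
            continue
        data = json.loads(pkg.read_text(encoding="utf-8"))
        for section in ("dependencies", "devDependencies"):
            version = data.get(section, {}).get("sst")
            if version is not None:
                found[str(relative)] = version
    return found


# Example call, run from the project root:
# for path, version in find_sst_versions(Path(".")).items():
#     print(path, version)
```

Any entry that does not show a 2.x version is a candidate for the same edit followed by npm install.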


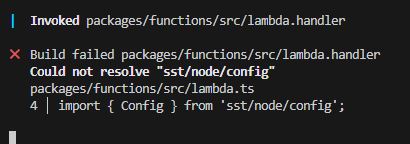

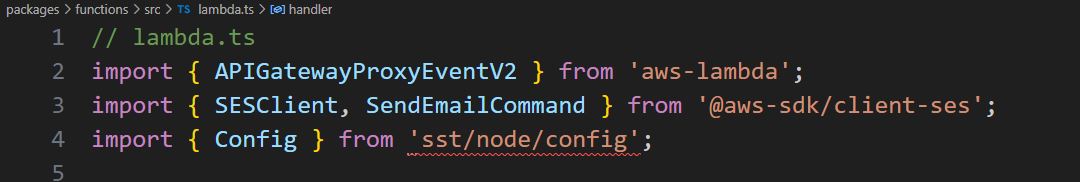

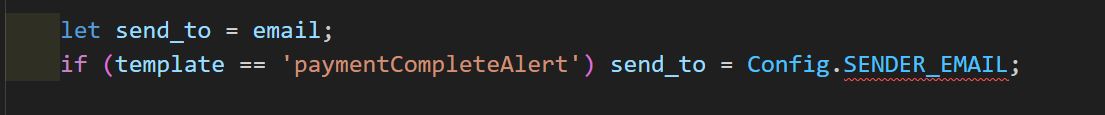

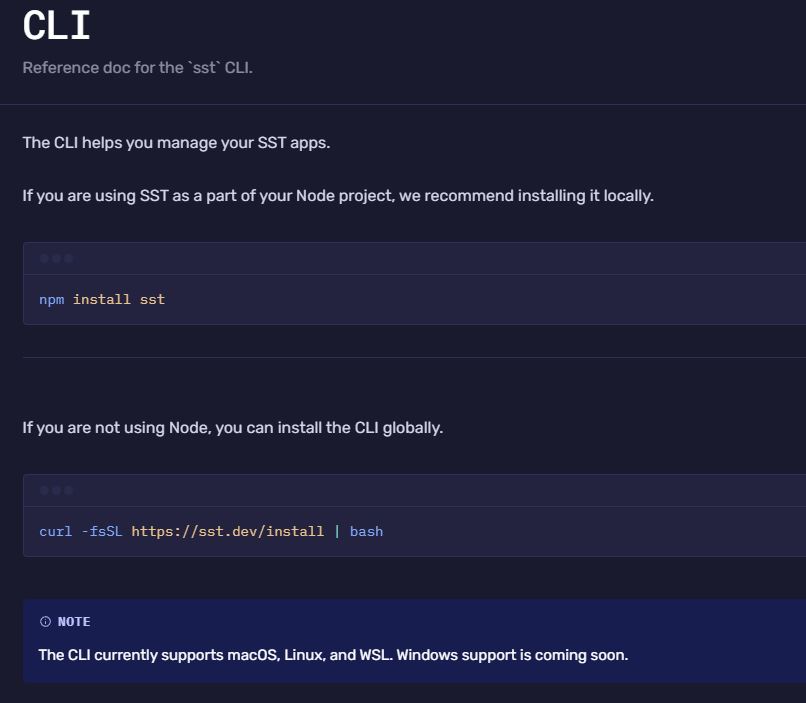

One more question, if I may.

When I run npx sst dev and then run dev, I see this:

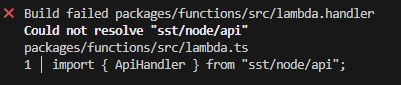

Invoked packages/functions/src/lambda.handler

✖ Build failed packages/functions/src/lambda.handler

Could not resolve "sst/node/api"

packages/functions/src/lambda.ts

1 │ import { ApiHandler } from "sst/node/api"

What could be the problem?

Instead of

import { ApiHandler } from "sst/node/api";

if I use

import { ApiHandler } from "@serverless-stack/node/api";

the test works fine. Is it okay to do it this way?

If it works for you, I think you can keep using it as is.

I asked for the screenshot to find out which directory you ran the command in. Did you run it from the project root directory?


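Normally npx installs sst automatically. Since that installation is failing, it looks like you will need to install it manually before continuing. The course uses SST v2, so could you install it with the command below and then run npx sst dev?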

npm install sst@two --save-exact

# after installing

npx sst dev

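More likely, your CPU or operating system is somewhat unusual. Could you tell me about those as well?

Or, if you are not using nvm, could you tell me which Node version you are using?

My Node version is v18.20.4, my CPU is an Intel(R) Core(TM) i5-6600K CPU @ 3.50GHz, and my operating system is 64-bit.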


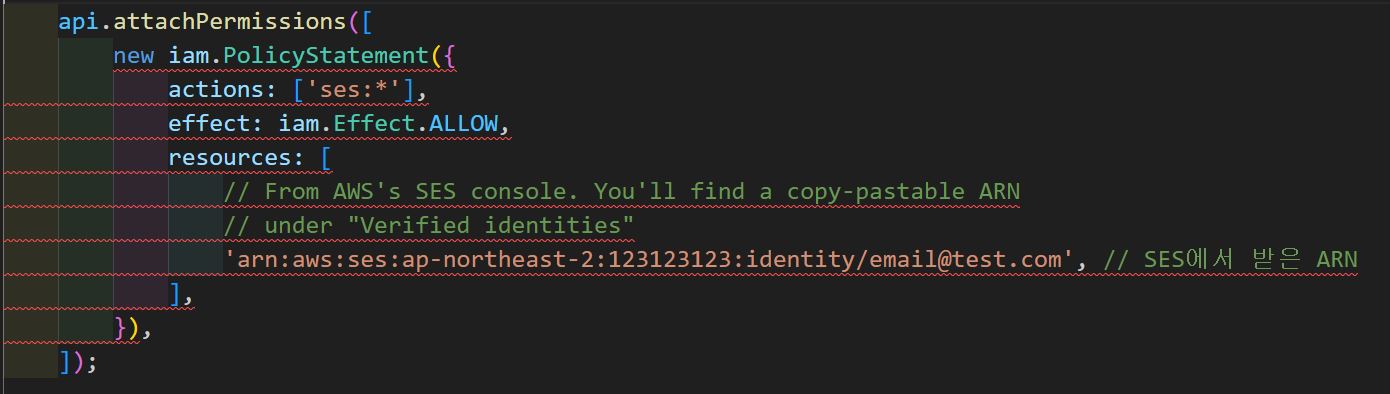

Just a few days ago, runwayml deleted its Stable Diffusion v1.5 model. The error most likely appears because the model repository can no longer be found.

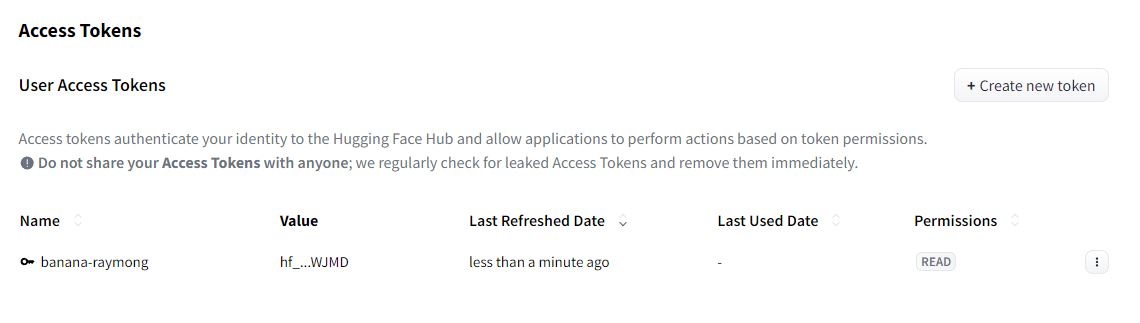

Using benjamin-paine/stable-diffusion-v1-5 as a backup should resolve it.

To do this, open the search panel in VS Code, search for runwayml/stable-diffusion-v1-5, and use Replace All to change every match to benjamin-paine/stable-diffusion-v1-5. Please refer to the attached image while doing this.
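If you would rather not do the replacement by hand, roughly the same Replace All can be sketched in Python. The helper name replace_repo_id and the choice to touch only .py files are assumptions made for this example. The editor's Replace All covers every file type, so widen suffixes if the repo id also appears elsewhere in the project.

```python
from pathlib import Path

OLD_REPO = "runwayml/stable-diffusion-v1-5"
NEW_REPO = "benjamin-paine/stable-diffusion-v1-5"


def replace_repo_id(root: Path, old: str = OLD_REPO, new: str = NEW_REPO,
                    suffixes: tuple = (".py",)) -> list:
    """Replace `old` with `new` in matching files under `root`.

    Hypothetical helper for illustration; returns the paths that were modified.
    """
    changed = []
    for path in sorted(root.rglob("*")):
        if not path.is_file() or path.suffix not in suffixes:
            continue
        text = path.read_text(encoding="utf-8")
        if old in text:
            path.write_text(text.replace(old, new), encoding="utf-8")
            changed.append(path)
    return changed


# Example call, run from the project root:
# for path in replace_repo_id(Path(".")):
#     print("updated", path)
```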